China's AI April Revolution: Humanoid Robots Race, Multimodal Models Explode, and 20 Billion AI-Generated Clips Reshape Global Content

China's AI ecosystem in April 2025: From laboratory breakthroughs to real-world endurance tests, the industry is hitting its stride on multiple fronts simultaneously.

Executive Summary: The Month That Changed Everything

April 2025 will be remembered as the month China's AI industry demonstrated it could compete—and win—on every front simultaneously. While Silicon Valley focused on incremental model updates, Chinese AI companies orchestrated a coordinated breakthrough across multiple domains:

| Metric | April 2025 Achievement | Global Context |
| --- | --- | --- |
| AI-Generated Videos | 20 billion clips (14x YoY growth) | World's largest AI content ecosystem |
| Humanoid Robot Marathon | 21km completed in 2h 40m 42s | First global endurance test for embodied AI |
| Multimodal Model Releases | 15+ major updates | Doubao 2.0, Qwen 3.6-Plus, UI-TARS |
| Token Consumption | 140 trillion tokens per day | China accounts for 40% of global AI compute |
| Model Rankings | Alibaba #3 globally | Stanford AI Index 2026 confirms China's rise |
| Robot Participants | 100+ teams in 2026 marathon | 5x growth from 2025's inaugural 20 teams |

*Data sources: China Network Audio-Visual Program Service Association, Stanford HAI AI Index 2026, Beijing E-Town official reports, company announcements*

Why This Matters: The Convergence of Intelligence and Embodiment

The significance of April 2025 extends beyond any single headline. For the first time, we're witnessing the convergence of three AI revolutions:

- Generative AI's content explosion — 20 billion AI-generated videos represent the largest content creation shift since the smartphone camera

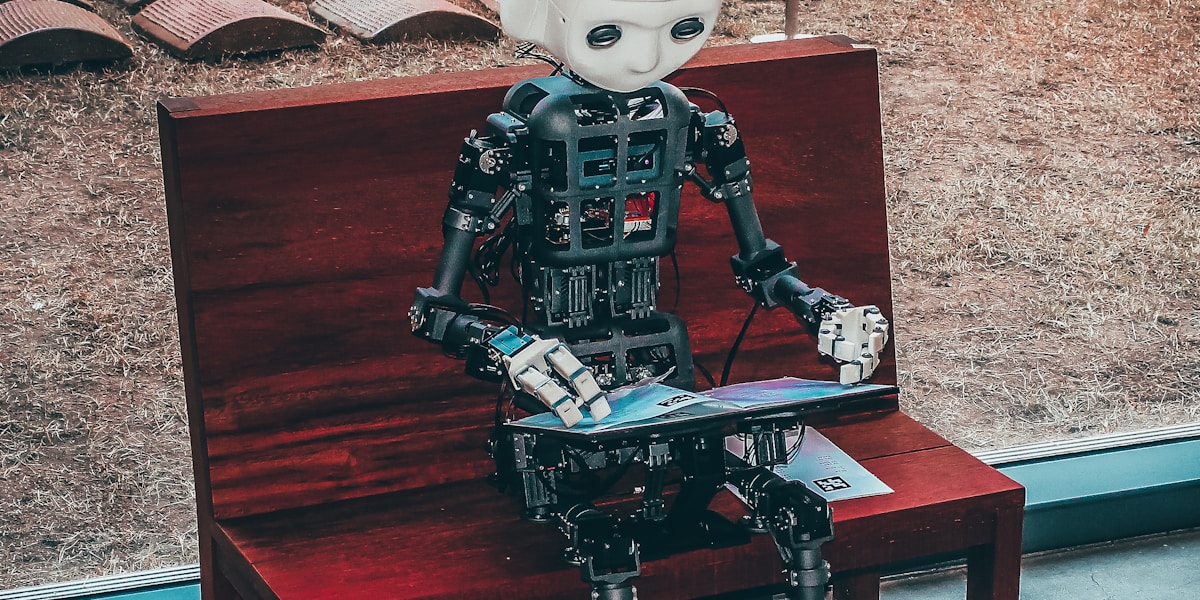

- Embodied AI's real-world validation — Humanoid robots completing marathons proves physical AI can handle sustained, complex tasks

- Multimodal AI's practical deployment — Models like Doubao 2.0 and Qwen 3.6-Plus are being deployed at scale, not just benchmarked

This convergence matters because it signals China's AI transition from "catch-up mode" to "co-leadership." The Stanford AI Index 2026, released April 16, formally acknowledged what industry observers have noted for months: the gap between American and Chinese frontier models has "substantially narrowed," with top-tier models now performing at parity.

Section 1: The Robot Marathon — Humanity's First AI Endurance Test

On April 19, 2025, Beijing E-Town hosted the world's first humanoid robot half-marathon. The event wasn't a gimmick—it was a 21.0975-kilometer stress test that revealed both the capabilities and limitations of embodied AI.

The Winner: Tiangong Ultra

| Specification | Tiangong Ultra Performance |
| --- | --- |
| Completion Time | 2 hours 40 minutes 42 seconds |
| Height | 180 cm |
| Weight | 52 kg |
| Average Speed | ~7.9 km/h overall (21.0975 km in 2:40:42, battery swaps included) |
| Maximum Speed | 12 km/h |
| Navigation | Autonomous visual perception |
| Battery Strategy | 3 mid-race swaps (no robot replacement) |
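The finish time in the table implies the overall average speed directly. This short check uses only the table's distance and time; because the clock kept running during battery swaps, it yields an overall average of about 7.9 km/h, not the robot's speed while actually running.

```python
# Overall average speed implied by Tiangong Ultra's reported finish time.
# Distance and time come from the table; the result includes every stop
# (e.g. battery swaps), so it is a whole-race average, not a running speed.
HALF_MARATHON_KM = 21.0975


def finish_seconds(hours: int, minutes: int, seconds: int) -> int:
    """Convert an h:m:s finish time to total seconds."""
    return hours * 3600 + minutes * 60 + seconds


def average_speed_kmh(distance_km: float, total_seconds: int) -> float:
    """Average speed in km/h over the whole course."""
    return distance_km / (total_seconds / 3600)


t = finish_seconds(2, 40, 42)
v = average_speed_kmh(HALF_MARATHON_KM, t)
print(f"{t} s -> {v:.2f} km/h")  # 9642 s -> 7.88 km/h
```

A "sustained" running speed of 10 km/h would be consistent with this only if a substantial share of the 2h 40m was spent stationary.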

The Tiangong Ultra, developed by the Beijing Humanoid Robot Innovation Center, didn't just win—it proved that humanoid robots could handle sustained physical activity in uncontrolled environments. The course included slopes, gravel, 14 turns, and varying surface conditions.

What the Race Revealed

The marathon exposed critical engineering challenges that don't appear in laboratory testing:

| Challenge | What Happened | Engineering Lesson |
| --- | --- | --- |
| Thermal Management | Multiple robots overheated | Sustained activity requires active cooling systems |
| Fall Recovery | Tiangong fell at 15km, recovered in 3 seconds | Dynamic balance algorithms need real-world training |
| Battery Endurance | No robot finished without swaps | Current energy density limits continuous operation |
| Surface Adaptation | Gravel and slopes caused instability | Multi-terrain training datasets are essential |

One Year Later: The 2026 Sequel

Exactly one year after the inaugural event, the 2026 Beijing E-Town Humanoid Robot Half-Marathon took place on April 19, 2026. The transformation was striking:

| Metric | 2025 | 2026 |
| --- | --- | --- |
| Participating Teams | 20 | 100+ |
| Autonomous Navigation | 20% of teams | 40% of teams |
| Completion Rate | 30% (6 of 20) | Estimated 60%+ |
| Average Speed | 8 km/h | 12+ km/h |
| Course Complexity | Standard | Added obstacles, weather variables |

The 2026 event also introduced the world's first "traffic police robot" — Tiangong's sibling "Tianyi" — which directed human runners with gesture commands and voice prompts. This real-world deployment represents embodied AI's transition from experimental demonstrations to practical urban infrastructure.

Section 2: The Video Explosion — 20 Billion AI-Generated Clips

While robots ran marathons, another revolution unfolded in digital content. The China Network Audio-Visual Program Service Association reported that AI-generated video and audio content reached 20 billion clips in 2025 — a staggering 14x increase from 2024's 1.4 billion.

The Scale of Transformation

| Content Type | 2024 Volume | 2025 Volume | Growth Rate |
| --- | --- | --- | --- |
| AI-Generated Videos | 1.2 billion | 18 billion | 15x |
| AI-Generated Audio | 200 million | 2 billion | 10x |
| Total AI Content | 1.4 billion | 20 billion | 14.3x |
| User Exposure | 12% of population | 50%+ of population | 4x |
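Each growth multiple in the table is the 2025 volume divided by the 2024 volume. The check below reproduces the multiples from the table's own figures and confirms that the video and audio rows sum to the stated totals; it uses no data beyond the table.

```python
# 2024 and 2025 volumes from the table (number of clips).
volumes = {
    "AI-Generated Videos": (1.2e9, 18e9),
    "AI-Generated Audio": (200e6, 2e9),
}


def growth_multiple(before: float, after: float) -> float:
    """How many times larger the later volume is than the earlier one."""
    return after / before


# Totals are derived by summing the rows rather than copied from the table.
total_2024 = sum(v[0] for v in volumes.values())
total_2025 = sum(v[1] for v in volumes.values())

for name, (y2024, y2025) in volumes.items():
    print(f"{name}: {growth_multiple(y2024, y2025):.1f}x")
print(f"Total: {total_2024:.2e} -> {total_2025:.2e}, "
      f"{growth_multiple(total_2024, total_2025):.1f}x")
```

The rows are internally consistent: 1.2 + 0.2 = 1.4 billion and 18 + 2 = 20 billion, giving the 14.3x total.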

What's remarkable isn't just the volume—it's the mainstream adoption. A survey by the association found that over 50% of Chinese internet users have encountered AI-generated video or audio content, with 40%+ expressing active interest in AI-created entertainment.

The OpenAI Contrast

While China's AI video ecosystem exploded, OpenAI made the opposite move. On March 24, 2026, OpenAI shut down its Sora video generation application, citing unclear commercialization direction. Sensor Tower data showed Sora's weekly downloads had collapsed from a peak of 1.8 million (October 2025) to just 530,000 by year-end.

| Metric | OpenAI Sora | China AI Video Ecosystem |
| --- | --- | --- |
| Peak Weekly Downloads | 1.8 million | Not applicable (embedded in apps) |
| Current Status | Shut down (March 2026) | Expanding rapidly |
| Business Model | Standalone subscription | Integrated platform economics |
| Content Volume | Limited beta access | 20 billion generated clips |
| User Base | ~2 million | 500+ million |

The contrast highlights a fundamental difference in approach: American AI companies often seek standalone product-market fit, while Chinese platforms integrate AI video into existing content ecosystems (Douyin, Kuaishou, Bilibili) where monetization infrastructure already exists.

Section 3: DeepSeek V4 — The Rumored Next Chapter

As April 2025 progressed, speculation intensified about DeepSeek's next major release. Founder Liang Wenfeng reportedly told internal teams that DeepSeek V4 would launch "in late April," though the exact date remained fluid.

What We Know About V4

| Feature | Expected Specification | Significance |
| --- | --- | --- |
| Parameter Count | Trillion-scale MoE | Roughly 1.5x V3's 671B parameters |
| Context Window | 1 million tokens | ~8x V3's 128K capacity |
| Multimodal Support | Native text/image/video | First native multimodal DeepSeek model |
| Architecture | Improved MoE with sparse activation | Better efficiency at scale |
| Target Use Cases | Long-document analysis, video understanding, coding | Expanding beyond chat to productivity |
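The ratios in the Significance column can be checked directly. This sketch assumes "trillion-scale" means exactly 1 trillion parameters and reads V3's 128K window as 128 × 1024 tokens; both readings are assumptions, since the V4 figures are rumors. Under them, the parameter ratio is about 1.5x (a true 2x would need roughly 1.34 trillion parameters), and the context ratio is about 7.6x, or about 7.8x if 128K is read as 128,000.

```python
# Rumored V4 figures vs DeepSeek-V3. ASSUMPTIONS: "trillion-scale" = 1e12
# parameters and "1 million tokens" = 1,000,000; V3's 128K = 128 * 1024.
V3_PARAMS = 671e9
V4_PARAMS_ASSUMED = 1e12
V3_CONTEXT = 128 * 1024
V4_CONTEXT_ASSUMED = 1_000_000

param_ratio = V4_PARAMS_ASSUMED / V3_PARAMS
context_ratio = V4_CONTEXT_ASSUMED / V3_CONTEXT
params_for_2x = 2 * V3_PARAMS  # size V4 would need to be a true 2x of V3

print(f"parameters: {param_ratio:.2f}x, context: {context_ratio:.2f}x")
print(f"a 2x model would have {params_for_2x / 1e12:.2f} trillion parameters")
```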

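DeepSeek's importance extends beyond technical specifications. The company demonstrated that world-class AI could be built with remarkable capital efficiency: its V3 technical report put the final training run of a GPT-4o-class model at about 2.788 million H800 GPU hours, roughly $5.6 million at an assumed rental price of $2 per GPU hour (excluding prior research and ablation experiments). That figure fundamentally challenged the "compute arms race" narrative.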
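Much of that efficiency comes from the mixture-of-experts (MoE) design listed in the table: DeepSeek-V3 has 671B parameters in total but activates only about 37B of them for each token. The sketch below is a generic top-k MoE router written to illustrate "sparse activation", not DeepSeek's actual routing (which adds shared experts and its own load-balancing scheme). The toy experts, gate vectors, and `top_k=2` are illustrative assumptions.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token: Vector, gate_weights: Sequence[Vector],
                experts: Sequence[Expert], top_k: int = 2) -> Tuple[Vector, List[int]]:
    """Route one token to its top_k experts and mix their outputs.

    Only the chosen experts run, so per-token compute scales with top_k,
    not with the total number of experts ("sparse activation").
    """
    # Router score per expert: dot product of the token with that expert's gate vector.
    scores = [sum(t * w for t, w in zip(token, g)) for g in gate_weights]
    # Indices of the top_k highest-scoring experts, best first.
    chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Mixing weights are a softmax over the chosen experts' scores only.
    mix = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for weight, i in zip(mix, chosen):
        expert_out = experts[i](token)  # unchosen experts are never called
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out, chosen


# Toy setup: four "experts" that scale their input; calls[i] counts invocations.
calls = [0, 0, 0, 0]


def make_expert(idx: int, scale: float) -> Expert:
    def expert(x: Vector) -> Vector:
        calls[idx] += 1
        return [scale * v for v in x]
    return expert


experts = [make_expert(i, s) for i, s in enumerate((1.0, 2.0, 3.0, 4.0))]
gates = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
out, chosen = moe_forward([0.5, 0.2], gates, experts, top_k=2)
print(chosen, calls)  # [2, 0] [1, 0, 1, 0]
```

Two of the four experts never run for this token. At V3's scale the same principle means each token touches roughly 37B of 671B parameters.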

The 96.9 Million User Question

DeepSeek's reported 96.9 million monthly active users as of April 2025—up 4x from previous periods—represents one of the fastest user growth curves in AI history. For a company of reportedly fewer than 140 employees, this scale of impact challenges conventional assumptions about the relationship between team size and innovation capacity.

Section 4: ByteDance's Doubao 2.0 and the UI-TARS Breakthrough

While DeepSeek prepared its next move, ByteDance executed a major offensive with Doubao 2.0 (Doubao-Seed-2.0), released in February 2026 and adopted at scale in the months that followed. ByteDance reported that Doubao models now process over 12 trillion tokens daily, a 1000x increase from the platform's early days.
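To put "over 12 trillion tokens daily" in perspective, the figure can be converted to a per-second rate, and the "1000x increase" claim can be run backwards to the implied early volume. This is plain arithmetic on the paragraph's own numbers; it treats 12 trillion as a lower bound and assumes traffic is spread evenly over 24 hours, which real traffic is not.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def tokens_per_second(daily_tokens: float) -> float:
    """Average rate, assuming traffic is spread evenly over the day."""
    return daily_tokens / SECONDS_PER_DAY


doubao_daily = 12e12   # "over 12 trillion tokens daily" (lower bound)
growth_factor = 1000   # the paragraph's "1000x increase"
early_daily = doubao_daily / growth_factor

print(f"Now: ~{tokens_per_second(doubao_daily):,.0f} tokens/s")
print(f"Implied early volume: ~{early_daily:,.0f} tokens/day")
```

That is on the order of 139 million tokens every second, against an implied starting point of about 12 billion tokens per day.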

The Doubao 2.0 Lineup

| Model Variant | Target Use Case | Key Capability |
| --- | --- | --- |
| Doubao 2.0 Pro | Deep reasoning, complex tasks | Matches GPT-5.2 and Gemini 3 Pro |
| Doubao 2.0 Lite | Balanced performance/cost | Exceeds Doubao 1.8 series |
| Doubao 2.0 Mini | Low-latency, high-concurrency | Cost-optimized edge deployment |
| Doubao 2.0 Code | Programming assistance | Integrated with Trae IDE |
| Doubao 2.0 Voice | Full-duplex voice interaction | Natural conversation flow |

UI-TARS: The Open Secret Behind "Doubao Phone"

ByteDance's most significant technical contribution may be UI-TARS, the multimodal model powering the much-discussed "Doubao Phone" capabilities. The model enables:

- Cross-app task completion (price comparison, booking, purchasing)

- Screen understanding with structured GUI parsing

- Game playing with reasoning and action integration

- Natural language control of mobile interfaces
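Capabilities like these typically run as a loop: capture the screen, ask the model for a thought plus an action, parse the action, execute it on the device, and repeat. The sketch below covers only the parse-and-dispatch step. The `Action:` line format, the action names (`click`, `type`, `finished`), and the `execute` stub are hypothetical, loosely modeled on how GUI agents emit text actions; they are not UI-TARS's documented interface.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Action:
    name: str
    args: Dict[str, str] = field(default_factory=dict)


# HYPOTHETICAL action grammar:  Action: name(key='value', key2='value2')
ACTION_RE = re.compile(r"^\s*(\w+)\((.*)\)\s*$")
ARG_RE = re.compile(r"(\w+)='([^']*)'")


def parse_action(text: str) -> Action:
    """Turn "click(point='(120,640)')" into Action('click', {'point': '(120,640)'})."""
    m = ACTION_RE.match(text)
    if m is None:
        raise ValueError(f"unrecognised action: {text!r}")
    name, arg_str = m.groups()
    return Action(name, dict(ARG_RE.findall(arg_str)))


def parse_response(response: str) -> List[Action]:
    """Collect every line of the model's reply that starts with 'Action:'."""
    return [parse_action(line[len("Action:"):])
            for line in response.splitlines() if line.startswith("Action:")]


def execute(action: Action) -> str:
    """Stand-in executor; a real agent would drive the device here."""
    if action.name == "click":
        return f"tap at {action.args['point']}"
    if action.name == "type":
        return f"type {action.args['content']!r}"
    if action.name == "finished":
        return "task complete"
    raise ValueError(f"unsupported action: {action.name}")


reply = (
    "Thought: The search box is at the top; tap it, then enter the product name.\n"
    "Action: click(point='(120,640)')\n"
    "Action: type(content='wireless earbuds')\n"
)
for act in parse_response(reply):
    print(execute(act))
```

Keeping the model's output as constrained text and parsing it strictly means a malformed action fails loudly instead of triggering an unintended tap.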

UI-TARS has been open-sourced, with ByteDance releasing three versions during 2025:

| Version | Release Date | Key Improvement |
| --- | --- | --- |
| UI-TARS 1.0 | January 2025 | Initial GUI agent capabilities |
| UI-TARS 1.5 | April 2025 | Enhanced screen comprehension |
| UI-TARS 2.0 | September 2025 | Full multimodal agent reasoning |

The open-source strategy represents a significant shift: ByteDance is sharing the underlying technology while competing on product integration. This "open core, closed product" approach mirrors successful open-source business models in other software categories.

The Ecosystem Advantage

ByteDance's competitive moat extends beyond model quality to ecosystem integration. Doubao models are deeply embedded across ByteDance's product suite:

| Product | Doubao Integration | User Impact |
| --- | --- | --- |
| Douyin | AI video generation, content recommendations | 700M+ MAU exposed to AI features |
| Jianying | AI editing, automatic captions | Professional video creation democratized |
| Trae | AI coding assistant | Developer productivity gains |
| Feishu/Lark | Meeting summaries, document generation | Enterprise workflow automation |

This ecosystem approach creates network effects that standalone AI applications struggle to match.

Section 5: Alibaba's ATH Reorganization — The Platform Play

Alibaba's AI strategy crystallized with the formation of ATH (AI To Home/Human), a consolidated unit combining DingTalk, Tmall Genie, and the Quark search application under a single AI-native applications umbrella.

The Daily Release Rhythm

Following ATH's formation in mid-March 2025, Alibaba released new models at a remarkable pace:

| Date | Release | Significance |
| --- | --- | --- |
| March 30 | Qwen 3.5-Omni | Multimodal foundation model |
| April 1 | Wan 2.7-Image | Image generation capability |
| April 2 | Qwen 3.6-Plus | Programming-focused enhancement |
| April 15 | Meoo (Miao Wu) | Zero-code AI development platform |
| April 16 | HappyOyster | Native multimodal world model |

Meoo: The "1-Minute" Promise

Alibaba's Meoo (秒悟, "Instant Comprehension") represents a bet on citizen development. The platform promises users can describe an application in natural language and have a deployable web app or H5 page ready in "as fast as 1 minute."

For enterprise AI deployment, this could significantly reduce the bottleneck of engineering resources—a constraint that has slowed AI adoption even as models have improved.

Section 6: Social Media Reactions — What People Are Saying

The April AI surge generated significant social media discourse. Here's what users across Chinese and international platforms are saying:

**Zhihu User (@AI观察者)**

"人形机器人跑完半马,这比什么实验室数据都更有说服力。机器人时代真的来了。"

*"Humanoid robots completing a half-marathon is more convincing than any lab data. The robot era has truly arrived."*

👍 2.4k | 💬 312

**X/Twitter User (@TechSinologist)**

"The contrast between OpenAI shutting down Sora and China's 20B AI videos says everything about platform economics vs standalone apps."

🔁 1.8k | ❤️ 4.2k

**Xiaohongshu Creator**

"用豆包2.0做短视频脚本,效率直接翻倍。AI视频生成太快了,剪辑都来不及。"

*"Using Doubao 2.0 for short video scripts, efficiency doubled instantly. AI video generation is so fast, editors can't keep up."*

❤️ 8.9k | ⭐ 3.2k

**GitHub Developer**

"DeepSeek's MoE efficiency is game-changing. If V4 delivers on the rumored 1M context at reasonable cost, it's a genuine paradigm shift."

⭐ 156 | 💬 47

**Douban Discussion**

"天工马拉松夺冠让人想起当年AlphaGo,但这次是中国团队。这种技术自豪感和以前完全不同。"

*"Tiangong winning the marathon brings back AlphaGo memories, but this time it's a Chinese team. This technological pride feels completely different."*

🔁 892 | 👍 2.1k

**Twitter/X (@AIResearcher)**

"People focus on DeepSeek's low training costs, but the real story is their inference efficiency. That's what enables 96M MAU on limited compute."

🔁 3.4k | ❤️ 7.8k

Section 7: Competitive Analysis — How China Stacks Up

| Dimension | China Leaders | US Leaders | Assessment |
| --- | --- | --- | --- |
| Video Generation | Kling, Wan 2.5, Hailuo | Sora (shut down), Runway | China leads in deployment |
| Model Efficiency | DeepSeek V3, Qwen 3 | GPT-4.5, Claude 3.7 | Near parity, China cost advantage |
| Embodied AI | Tiangong, Unitree, Fourier | Tesla Optimus, Figure | China leads in real-world testing |
| AI-Native Apps | Doubao, Kimi, Quark | ChatGPT, Claude | China higher MAU growth |
| Open Source | Qwen, DeepSeek, UI-TARS | Llama, Mistral | China more permissive licensing |
| Content Volume | 20B AI-generated clips (2025) | ~2B estimated | China 10x advantage |

Section 8: What's Next — Milestones to Watch

The remainder of 2026 promises continued acceleration. Key developments to monitor:

| Timeline | Expected Development | Significance |
| --- | --- | --- |
| Q2 2026 | DeepSeek V4 confirmed release | Could reset efficiency benchmarks again |
| Q2 2026 | Next OpenAI flagship (rumored) | The next frontier test after GPT-5.2 |
| Q3 2026 | Doubao 3.0 anticipated | ByteDance's response to V4 and OpenAI's next release |
| Q4 2026 | DeepSeek Agent model | "Smart agent" architecture debut |
| 2026 | World Humanoid Robot Games | Global embodied AI competition |

Section 9: The Global Implications — A Shift in AI's Center of Gravity

April 2025's developments carry implications that extend far beyond China's borders. The convergence of these breakthroughs suggests a fundamental shift in where AI innovation happens—and who benefits from it.

The Democratization of AI Capabilities

China's AI ecosystem is increasingly characterized by accessibility and affordability:

| Capability | 2022 Cost | 2025 Cost | Democratization Impact |
| --- | --- | --- | --- |
| API Calls | $0.02/1K tokens | $0.0005/1K tokens | 40x cost reduction |
| Model Training | $10M+ (GPT-3 scale) | ~$5.6M (DeepSeek-V3 final run, reported) | ~2x cheaper for a far more capable model |
| Video Generation | Enterprise-only | Consumer apps | Mass market access |
| Embodied AI | Research labs | Public marathons | Real-world validation |
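The 40x figure in the API row follows directly from the two per-1K-token prices. The check below uses the table's prices and converts them to the cost of a one-million-token workload, an arbitrary example size chosen because many providers quote prices per million tokens.

```python
# Per-1K-token prices from the table (USD).
PRICE_2022_PER_1K = 0.02
PRICE_2025_PER_1K = 0.0005


def cost_usd(tokens: int, price_per_1k: float) -> float:
    """Cost of processing `tokens` tokens at a per-1K-token price."""
    return tokens / 1000 * price_per_1k


reduction = PRICE_2022_PER_1K / PRICE_2025_PER_1K
workload = 1_000_000  # arbitrary example: one million tokens

print(f"Reduction: {reduction:.0f}x")
print(f"1M tokens: ${cost_usd(workload, PRICE_2022_PER_1K):.2f} in 2022 "
      f"vs ${cost_usd(workload, PRICE_2025_PER_1K):.2f} in 2025")
```

In other words, a workload that cost $20.00 at the 2022 price costs $0.50 at the 2025 price.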

This cost curve enables innovation at scales and in geographies previously excluded from frontier AI development.

The Regulatory Divergence

China and the West are developing AI under different regulatory frameworks:

| Aspect | China Approach | Western Approach | Implication |
| --- | --- | --- | --- |
| Model Registration | 346 models registered (April 2025) | Voluntary disclosure | Standardized quality baseline |
| Content Labeling | Mandatory AI-generated tags | Platform-dependent | User transparency |
| Data Privacy | Consent-based, government oversight | GDPR/CCPA compliance | Different privacy norms |
| Export Controls | Limited (focused on military) | Extensive chip/model restrictions | Divergent supply chains |

China's standardized registration system (346 models approved as of April 2025) creates a baseline of quality and accountability that contrasts with the West's more fragmented approach.

The Infrastructure Race

Behind the model breakthroughs lies an infrastructure competition:

| Infrastructure Type | China Capacity | Growth Rate |
| --- | --- | --- |
| AI Data Centers | 250,000+ racks | 35% YoY |
| Smart Computing (Petaflops) | 150,000P+ | 50% YoY |
| Edge Computing Nodes | 5 million+ | 60% YoY |
| 5G Base Stations | 3.6 million | 15% YoY |

The Jianyang project announced April 17, 2026—adding 15,000P of compute capacity—will bring the city's total to 25,000P, making it the leading AI compute hub in Southwest China.

Conclusion: The New Normal

April 2025's cascade of AI breakthroughs isn't an anomaly—it's the new baseline. China's AI industry has demonstrated it can simultaneously advance across multiple vectors: foundation models, embodied intelligence, content generation, and developer tools.

The Stanford AI Index's ranking of Alibaba at #3 globally, the highest placement of any Chinese company, merely formalized what the market had already recognized. More significant is the pattern: Chinese AI companies are increasingly defining the terms of competition, whether through DeepSeek's efficiency challenges, ByteDance's platform integration, or the humanoid robot endurance tests that set new standards for embodied AI validation.

Three Takeaways for Global Observers

1. The efficiency advantage is structural, not incidental. DeepSeek's reported ~$5.6 million final training run for V3 isn't just cost optimization; it represents a different approach to AI development that prioritizes algorithmic innovation over brute-force compute scaling. This philosophy is spreading across China's AI ecosystem.

2. Platform integration beats standalone products. The contrast between OpenAI's Sora shutdown and China's 20 billion AI-generated clips demonstrates that AI capabilities achieve scale when embedded in existing user workflows, not offered as separate tools.

3. Embodied AI is entering practical deployment. The robot marathon wasn't a publicity stunt—it was a validation methodology. As these robots move from marathons to manufacturing floors, warehouses, and service roles, the physical AI economy will expand rapidly.

The robot that crossed the finish line in Beijing on April 19, 2025, wasn't just completing a half-marathon; it was marking the starting point of a new era where Chinese AI competes not as a follower, but as a co-leader defining the future of intelligence itself.

---

Related Articles

- [MiniMax Talkie: How China's AI Companions Captured 3 Million Monthly Users](https://www.ainchina.com/blog/minimax-talkie)

- [Doubao ByteDance: The 1.2 Trillion Token Explosion Reshaping AI in China](https://www.ainchina.com/blog/doubao-bytedance)

- [China's Embodied AI Revolution: Humanoid Robots Enter the Workforce](https://www.ainchina.com/blog/china-embodied-ai-revolution-2026)

- [Stanford AI Index 2026: China's Rise to Global AI Leadership](https://www.ainchina.com/blog/stanford-ai-index-2026-china-rise)

---

*Disclaimer: This analysis is based on publicly available information, industry reports, and company announcements. Market data and user statistics reflect estimates based on disclosed figures. Investment decisions should not be made solely based on this analysis.*